Synthetic Data Generation#

Generate synthetic data with the generators listed below Based on the Wisconsin Breast Cancer Dataset (WBCD)

[20]:

# Standard library

import sys

from pathlib import Path

sys.path.append("..")

# 3rd party packages

import matplotlib.pyplot as plt

import pandas as pd

# Local packages

from clover.generators import (

Generator,

DataSynthesizerGenerator,

SynthpopGenerator,

SmoteGenerator,

TVAEGenerator,

CTGANGenerator,

FinDiffGenerator,

MSTGenerator,

CTABGANGenerator,

)

import clover.utils.draw as draw

from clover.utils.standard import create_directory

Load the real WBCD training dataset#

[21]:

PROJECT_PATH = Path(".")

OUTPUT_PATH = PROJECT_PATH / "output"

[22]:

df_real_train = pd.read_csv("data/breast_cancer_wisconsin_train.csv")

df_real_test = pd.read_csv("data/breast_cancer_wisconsin_test.csv")

df_real_train.shape, df_real_test.shape

[22]:

((359, 10), (90, 10))

[23]:

df_real_train.head()

[23]:

| Clump_Thickness | Uniformity_of_Cell_Size | Uniformity_of_Cell_Shape | Marginal_Adhesion | Single_Epithelial_Cell_Size | Bare_Nuclei | Bland_Chromatin | Normal_Nucleoli | Mitoses | Class | |

|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 10 | 10 | 10 | 3 | 10 | 10 | 9 | 10 | 1 | 1 |

| 1 | 5 | 1 | 2 | 1 | 2 | 1 | 1 | 1 | 1 | 0 |

| 2 | 6 | 10 | 2 | 8 | 10 | 2 | 7 | 8 | 10 | 1 |

| 3 | 4 | 8 | 6 | 4 | 3 | 4 | 10 | 6 | 1 | 1 |

| 4 | 7 | 4 | 7 | 4 | 3 | 7 | 7 | 6 | 1 | 1 |

Create the metadata dictionary#

The continuous and categorical variables need to be specified, as well as the variable to predict for the future learning task (used by SMOTE)#

[24]:

metadata = {

"continuous": [

"Clump_Thickness",

"Uniformity_of_Cell_Size",

"Uniformity_of_Cell_Shape",

"Marginal_Adhesion",

"Single_Epithelial_Cell_Size",

"Bland_Chromatin",

"Normal_Nucleoli",

"Mitoses",

"Bare_Nuclei",

],

"categorical": ["Class"],

"variable_to_predict": "Class",

}

Choose the generator#

[25]:

generator = "synthpop" # to choose among this list: ["synthpop", "smote", "datasynthesizer", "mst", "ctgan", "tvae", "ctabgan", "findiff"]

dp = False

Synthpop#

[26]:

if generator == "synthpop":

if not dp:

gen = SynthpopGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator,

variables_order=None, # use the dataframe columns order by default

)

else:

gen = SynthpopGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator,

variables_order=None, # use the dataframe columns order by default

epsilon=10,

max_depth=5,

)

SMOTE#

[27]:

if generator == "smote":

if not dp:

gen = SmoteGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator,

k_neighbors=None, # cannot be found by searching the best hyperparameters yet, set to 5 by default

)

else:

gen = SmoteGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator,

k_neighbors=5, # cannot be found by searching the best hyperparameters yet, set to 5 by default

epsilon=10,

)

Datasynthesizer#

[28]:

if generator == "datasynthesizer":

if not dp:

gen = DataSynthesizerGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator

candidate_keys=None, # the identifiers

epsilon=0, # for the differential privacy

degree=2, # the maximal number of parents for the bayesian network

)

else:

gen = DataSynthesizerGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator

candidate_keys=None, # the identifiers

epsilon=10, # for the differential privacy

degree=2, # the maximal number of parents for the bayesian network

)

MST#

[29]:

# MST includes DP by design, hence non-dp mode is not available

if generator == "mst":

gen = MSTGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator

epsilon=10, # the privacy budget of the differential privacy

delta=1e-9, # the failure probability of the differential privacy

)

CTGAN#

[30]:

if generator == "ctgan":

if not dp:

gen = CTGANGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator

discriminator_steps=4, # the number of discriminator updates to do for each generator update

epochs=300, # the number of training epochs

batch_size=100, # the batch size for training

)

else:

gen = CTGANGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator

discriminator_steps=4, # the number of discriminator updates to do for each generator update

epochs=300, # the number of training epochs

batch_size=100, # the batch size for training

epsilon=10,

delta=1e-5,

)

TVAE#

[31]:

if generator == "tvae":

if not dp:

gen = TVAEGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator

epochs=300, # the number of training epochs

batch_size=100, # the batch size for training

compress_dims=(249, 249), # the size of the hidden layers in the encoder

decompress_dims=(249, 249), # the size of the hidden layers in the decoder

)

else:

gen = TVAEGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator

epochs=300, # the number of training epochs

batch_size=100, # the batch size for training

compress_dims=(249, 249), # the size of the hidden layers in the encoder

decompress_dims=(249, 249), # the size of the hidden layers in the decoder

epsilon=10,

delta=1e-5,

)

CTAB-GAN+#

[32]:

if generator == "ctabgan":

if not dp:

gen = CTABGANGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator

mixed_columns=None, # dictionary of "mixed" column names with corresponding categorical modes

log_columns=None, # list of skewed exponential numerical columns

integer_columns=metadata[

"continuous"

], # list of numeric columns without floating numbers

class_dim=(

256,

256,

256,

256,

), # size of each desired linear layer for the auxiliary classifier

random_dim=100, # dimension of the noise vector fed to the generator

num_channels=64, # number of channels in the convolutional layers of both the generator and the discriminator

l2scale=1e-5, # rate of weight decay used in the optimizer of the generator, discriminator and auxiliary classifier

batch_size=150, # batch size for training

epochs=500, # number of training epochs

)

else:

gen = CTABGANGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator

mixed_columns=None, # dictionary of "mixed" column names with corresponding categorical modes

log_columns=None, # list of skewed exponential numerical columns

integer_columns=metadata[

"continuous"

], # list of numeric columns without floating numbers

class_dim=(

32,

32,

32,

32,

), # size of each desired linear layer for the auxiliary classifier

random_dim=10, # dimension of the noise vector fed to the generator

num_channels=8, # number of channels in the convolutional layers of both the generator and the discriminator

l2scale=1e-5, # rate of weight decay used in the optimizer of the generator, discriminator and auxiliary classifier

batch_size=150, # batch size for training

epochs=10, # number of training epochs

epsilon=10,

delta=1e-5,

)

FinDiff#

[33]:

if generator == "findiff":

if not dp:

gen = FinDiffGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator

learning_rate=1e-4, # the learning rate for training

batch_size=512, # the batch size for training and sampling

diffusion_steps=500, # the diffusion timesteps for the forward diffusion process

epochs=500, # the training iterations

mpl_layers=[1024, 1024, 1024, 1024], # the width of the MLP layers

activation="lrelu", # the activation fuction

dim_t=64, # dimensionality of the intermediate layer for connecting the embeddings

cat_emb_dim=2, # dimension of categorical embeddings

diff_beta_start_end=[1e-4, 0.02], # diffusion start and end betas

scheduler="linear", # diffusion scheduler

)

else:

gen = FinDiffGenerator(

df=df_real_train,

metadata=metadata,

random_state=66, # for reproducibility, can be set to None

generator_filepath=None, # to load an existing generator

learning_rate=1e-4, # the learning rate for training

batch_size=512, # the batch size for training and sampling

diffusion_steps=500, # the diffusion timesteps for the forward diffusion process

epochs=500, # the training iterations

mpl_layers=[1024, 1024, 1024, 1024], # the width of the MLP layers

activation="lrelu", # the activation fuction

dim_t=64, # dimensionality of the intermediate layer for connecting the embeddings

cat_emb_dim=2, # dimension of categorical embeddings

diff_beta_start_end=[1e-4, 0.02], # diffusion start and end betas

scheduler="linear", # diffusion scheduler

epsilon=1, # value for DP parameter (delta is at default value 1e-5)

)

Fit the generator to the real data#

[34]:

create_directory(path="../results/generators") # create path if doesn't exist

gen.preprocess()

gen.fit(save_path="../results/generators") # the path should exist

Display the fitted generator#

[35]:

gen.display()

Constructed sequential trees:

Clump_Thickness has parents []

Uniformity_of_Cell_Size has parents ['Clump_Thickness']

Uniformity_of_Cell_Shape has parents ['Clump_Thickness', 'Uniformity_of_Cell_Size']

Marginal_Adhesion has parents ['Clump_Thickness', 'Uniformity_of_Cell_Size', 'Uniformity_of_Cell_Shape']

Single_Epithelial_Cell_Size has parents ['Clump_Thickness', 'Uniformity_of_Cell_Size', 'Uniformity_of_Cell_Shape', 'Marginal_Adhesion']

Bare_Nuclei has parents ['Clump_Thickness', 'Uniformity_of_Cell_Size', 'Uniformity_of_Cell_Shape', 'Marginal_Adhesion', 'Single_Epithelial_Cell_Size']

Bland_Chromatin has parents ['Clump_Thickness', 'Uniformity_of_Cell_Size', 'Uniformity_of_Cell_Shape', 'Marginal_Adhesion', 'Single_Epithelial_Cell_Size', 'Bare_Nuclei']

Normal_Nucleoli has parents ['Clump_Thickness', 'Uniformity_of_Cell_Size', 'Uniformity_of_Cell_Shape', 'Marginal_Adhesion', 'Single_Epithelial_Cell_Size', 'Bare_Nuclei', 'Bland_Chromatin']

Mitoses has parents ['Clump_Thickness', 'Uniformity_of_Cell_Size', 'Uniformity_of_Cell_Shape', 'Marginal_Adhesion', 'Single_Epithelial_Cell_Size', 'Bare_Nuclei', 'Bland_Chromatin', 'Normal_Nucleoli']

Class has parents ['Clump_Thickness', 'Uniformity_of_Cell_Size', 'Uniformity_of_Cell_Shape', 'Marginal_Adhesion', 'Single_Epithelial_Cell_Size', 'Bare_Nuclei', 'Bland_Chromatin', 'Normal_Nucleoli', 'Mitoses']

Generate the synthetic data#

[36]:

create_directory(path="../results/data") # create path if doesn't exist

df_synth_train = gen.sample(

save_path="../results/data", # the path should exist

num_samples=len(

df_real_train

), # can be different from the real data, but for computing the utility metrics should be the same

)

df_synth_test = gen.sample(

save_path="../results/data", # the path should exist

num_samples=len(

df_real_test

), # can be different from the real data, but for computing the utility metrics should be the same

)

[37]:

df_synth_train.head()

[37]:

| Clump_Thickness | Uniformity_of_Cell_Size | Uniformity_of_Cell_Shape | Marginal_Adhesion | Single_Epithelial_Cell_Size | Bare_Nuclei | Bland_Chromatin | Normal_Nucleoli | Mitoses | Class | |

|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 1 | 1 | 1 | 1 | 2 | 1 | 2 | 2 | 1 | 0 |

| 1 | 10 | 4 | 3 | 6 | 6 | 10 | 7 | 6 | 2 | 1 |

| 2 | 9 | 10 | 10 | 8 | 5 | 10 | 4 | 7 | 1 | 1 |

| 3 | 10 | 8 | 8 | 7 | 10 | 10 | 4 | 8 | 10 | 1 |

| 4 | 1 | 4 | 2 | 10 | 3 | 7 | 5 | 6 | 2 | 0 |

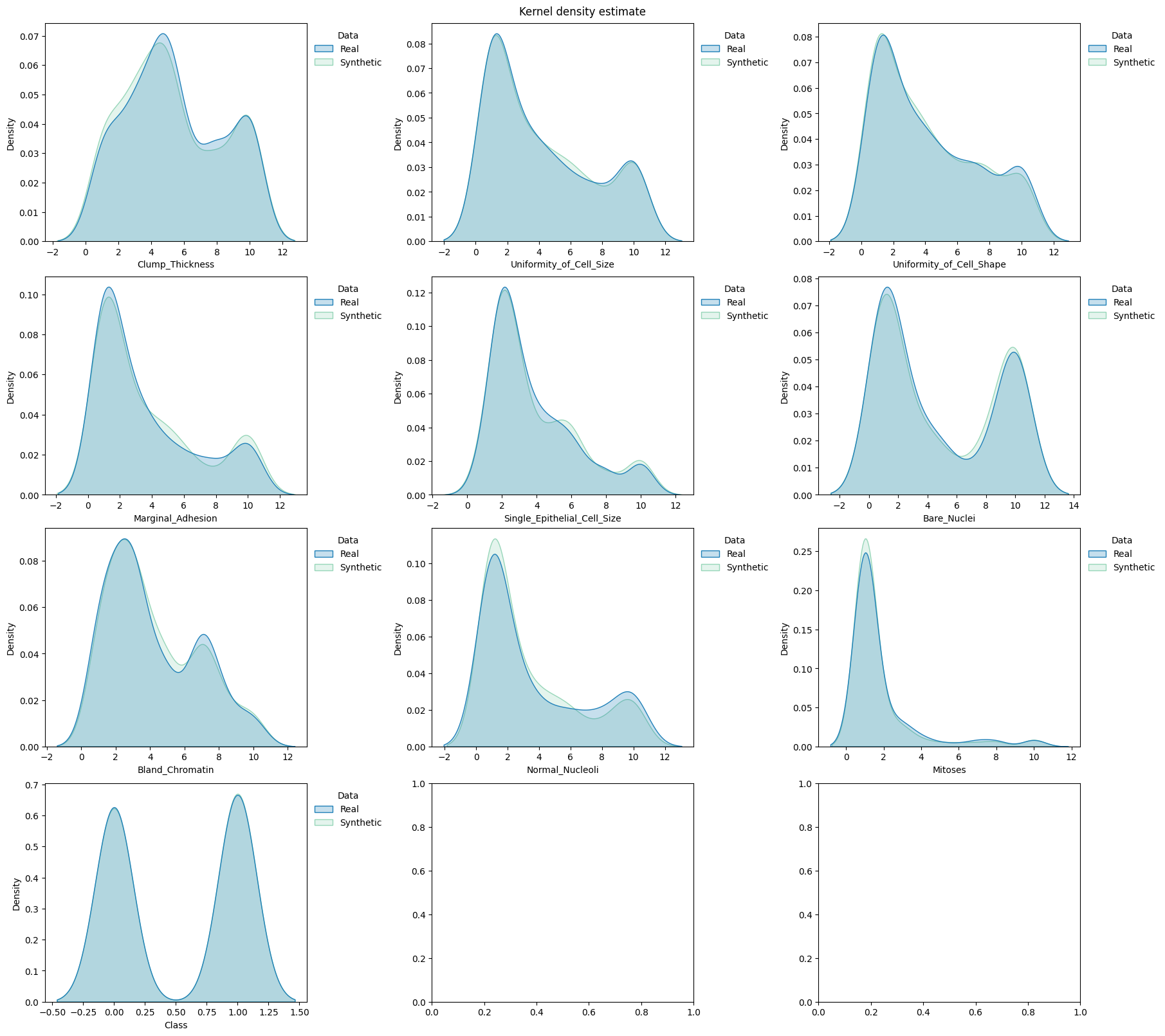

[38]:

fig, axes = plt.subplots( # manually set number of cols/rows

nrows=4, ncols=3, squeeze=0, figsize=(18, 16), layout="constrained"

)

axes = axes.reshape(-1)

draw.kde_plot_hue_plot_per_col(

df=df_real_train,

df_nested=df_synth_train,

original_name="Real",

nested_name="Synthetic",

hue_name="Data",

title="Kernel density estimate",

axes=axes,

)